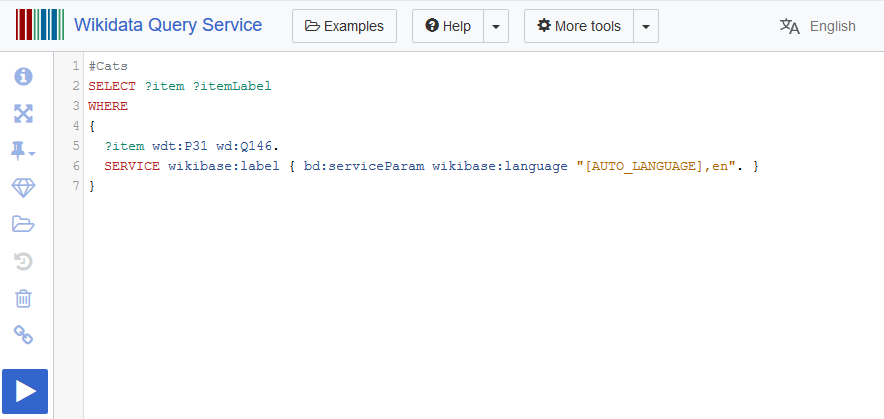

The Wikidata query service is a public SPARQL endpoint for querying all of the data contained within Wikidata. In a previous blog post I walked through how to set up a complete copy of this query service. One of the steps in that process is the munge step, which performs some pre-processing on the RDF dump that comes directly from Wikidata.

Back in 2019 this step took 20 hours. As Wikidata has continued to grow, it now takes somewhere between 1 and 2 days. The original munge step (munge.sh) uses only a single CPU. The WMF has been experimenting for some time with performing this step on their Hadoop cluster as part of their modern update mechanism (the streaming updater). An additional patch has now made this approach usable for the current default load process (using loadData.sh) as well.

This post walks through using the new Hadoop-based munge step with the latest Wikidata TTL dump on Google Cloud's Dataproc service. With an 8 worker cluster this cuts the munge time down from 1-2 days to just 2 hours. Even faster times can be expected with more workers, all the way down to ~20 minutes.
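To put those timings in perspective, here is a small back-of-the-envelope sketch of the speedup factors they imply. It only uses the figures quoted above (1-2 days single-CPU, 2 hours on 8 workers, ~20 minutes best case); the constant names and the `speedup` helper are illustrative, not part of any WMF tooling.

```python
# Rough speedup arithmetic using only the timings quoted in this post (hours).
SINGLE_CPU_HOURS = (24, 48)   # munge.sh: "somewhere between 1 and 2 days"
DATAPROC_8_WORKERS_HOURS = 2  # Hadoop-based munge on an 8 worker cluster
BEST_CASE_HOURS = 20 / 60     # "~20 minutes" with more workers


def speedup(before_hours: float, after_hours: float) -> float:
    """Return how many times faster the 'after' run is than the 'before' run."""
    return before_hours / after_hours


for label, after in [("8 workers", DATAPROC_8_WORKERS_HOURS),
                     ("~20 minutes", BEST_CASE_HOURS)]:
    # Compute the range against both ends of the 1-2 day single-CPU estimate.
    low, high = (speedup(before, after) for before in SINGLE_CPU_HOURS)
    print(f"{label}: {low:.0f}x to {high:.0f}x faster")
```

Running it gives roughly a 12x to 24x improvement for the 8 worker cluster, and 72x to 144x for the ~20 minute best case.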