SMWCon 2021 is happening as I write this post. I was invited to give a short talk on day 2 as part of a MediaWiki and Docker workshop organized by Cindy Cicalese. As I am writing a month of blog posts, I’m going to turn my slides into a more digestible and searchable blog post.

The original slides can still be found on Google Slides, and when the conference recording is up you should find it on the associated event page.

Disclaimer

- mediawiki-docker-dev was created at a hackathon that Wikimedia Deutschland paid for me to attend

- mediawiki-docker-dev CLI experimentation was done in my Wikimedia Deutschland working time

- The mwcli tool is owned by the WMF Release Engineering team (mediawiki.org, gitlab)

- My mediawiki-docker-dev port to mwcli was done in my volunteer time

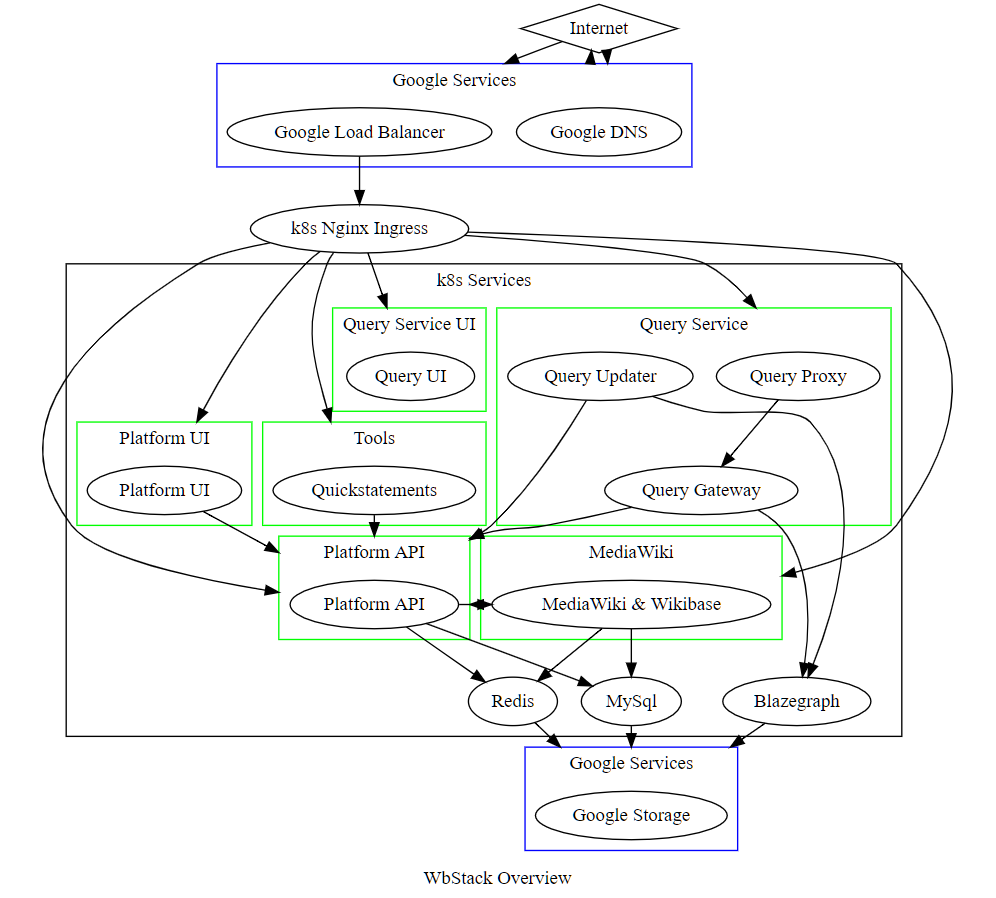

- WBStack was created by me in my volunteer time

- WBaaS will be run by WMDE in 2021