SMWCon 2021 is happening as I write this post. I was invited to give a short talk as part of a MediaWiki and Docker workshop organized by Cindy Cicalese on day 2. As I am writing a month of blog posts, I’m going to turn my slides into a more digestible and searchable blog post.

The original slides can still be found on Google Slides, and when the conference recording is up you should find it on the associated event page.

Disclaimer

- mediawiki-docker-dev was created at a hackathon Wikimedia Deutschland paid for me to attend

- mediawiki-docker-dev CLI experimentation was done in my Wikimedia Deutschland working time

- The mwcli tool is owned by the WMF Release Engineering team (mediawiki.org, gitlab)

- My mediawiki-docker-dev port to mwcli was done in my volunteer time

- WBStack was created by me in my volunteer time

- WBaaS will be run by WMDE in 2021

mediawiki-docker-dev

mediawiki-docker-dev (or MWDD) was a development environment for MediaWiki, based on Docker and docker-compose, built in 2017 as a hackathon project. It started off more as a “testing system” for multiple PHP versions, but it quickly became apparent that it was quite nice for a quick development setup.

It was never intended to be a development environment, and thus left a number of things to be desired. But on the whole, people enjoyed using it.

- 167 line docker-compose file

- Bash script wrappers around docker-compose commands

- Services

  - Internal DNS

  - Reverse proxy

  - MediaWiki

  - SQL DB (with replication)

  - PHPMyAdmin

  - Graphite & Statsd

  - Redis

- Lifecycle

  - Various setup bits

./create

./addsite default

./destroy

CLI experimentation

While we were investigating development environments in general at Wikimedia Deutschland, I chose to experiment with an improved CLI around mediawiki-docker-dev, which a large number of our engineers were using at the time.

I tried to solve the following problems:

- Have to run all services even if you don’t want to

- Want to include more services than the standard set

- Bash scripts work, but are not friendly to devs or users

- Just cloning the mediawiki-docker-dev git repo is bad distribution

The experimentation involved writing a CLI interface in Symfony which:

- Switched from a single docker-compose file to 12 files, each containing a set of services that fit into a logical group (e.g. base (DNS and proxy), and mediawiki)

- docker-compose files orchestrated using a control application that shells out to docker-compose

- From 9 to 4 services at startup

- CLI is now a useful, framework-provided interface rather than a pile of bash

- New functionality provided: adminer, fresh, quibble, composer
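As a rough illustration of the orchestration idea in this list (one compose file per service group, combined by a control application that shells out to docker-compose), here is a minimal Python sketch. The directory layout, group names and function name are assumptions made up for illustration and are not taken from the actual Symfony tool; the only real interface relied on is docker-compose's repeatable `-f` flag.

```python
import shlex
from pathlib import PurePosixPath

# Hypothetical layout: one compose file per service group, e.g. compose/base.yml
COMPOSE_DIR = PurePosixPath("compose")

# Hypothetical default groups; the real tool's defaults may differ.
DEFAULT_GROUPS = ["base", "mediawiki"]


def compose_command(groups, action):
    """Build a docker-compose argv that loads one file per selected group."""
    argv = ["docker-compose"]
    for group in groups:
        # docker-compose accepts -f several times and merges the files.
        argv += ["-f", str(COMPOSE_DIR / f"{group}.yml")]
    return argv + list(action)


if __name__ == "__main__":
    # Only print the command here; nothing is executed.
    print(shlex.join(compose_command(DEFAULT_GROUPS + ["redis"], ["up", "-d"])))
```

A real control application would hand the resulting list to a process runner such as `subprocess.run`. Starting fewer services is then just a matter of passing a shorter list of groups.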

This resulted in:

- 12 separate docker-compose files

- PHP CLI interface

- Service groups:

  - Base (DNS & Reverse proxy)

  - MediaWiki

  - Adminer / PhpMyAdmin

  - DB (Optional replication)

  - CLI tools (Composer, Fresh (npm), Quibble)

  - Redis

  - Graphite & Statsd

- Lifecycle

  - Various setup bits

mwdd mw:create

mwdd mw:install default

mwdd dc:destroy

mwcli

Some WMF folks started a CLI tool, written in Go, with a development environment focus. I ported an improved version of my experimentation into this tool.

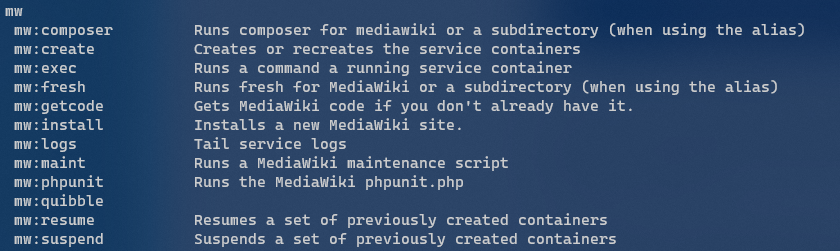

mwcli is now regularly released, tested in CI, has a clean interface and many other nice things. mwcli is also more than just a development environment: the CLI includes other commands too, such as basic commands for Gerrit, GitLab, Toolhub and Codesearch.

(documentation, code repository, phabricator board, releases)

- 15 separate docker-compose files

- Familiar CLI interface (written in Go)

- Service groups

  - Base (DNS & Reverse proxy)

  - MediaWiki (Apache & PHP FPM)

  - Adminer / PhpMyAdmin

  - SQL (Optional replication) / Postgres / SQLite

  - CLI tools (Composer, Fresh (npm), Quibble)

  - Redis / MemCached

  - Graphite & Statsd

  - Eventlogging

  - Elasticsearch

  - Mailhog

- Lifecycle

  - Setup wizard

mw docker mediawiki create

mw docker mediawiki install --dbtype=sqlite --dbname=default

mw docker destroy

This comes with a bunch of flexibility & features:

- Single cross platform binary download (releases)

- Custom services can be added to a custom docker-compose file, accessible via the custom service group in the CLI (resolved phab task)

- Quickly & Easily run other PHP versions (docs)

- Remote debugging with XDebug (docs)

- Setup wizard that guides you from no MediaWiki to a cloned MediaWiki, port selection & more

- Prompts users to update when updates are available

- Continuous integration tests covering various full dev environment lifecycles

- Basic (but optional) automatic connection of services to MediaWiki on setup

- Inline help for services and commands

- more…

mwcli – Wizard in action

mwcli – MySQL wiki in action

Kubernetes

Recently Wikimedia Deutschland has started working toward taking over the WBStack / WBaaS software components, setting up their own Wikibase as a Service offering. This code runs entirely on Kubernetes provisioned by terraform and helmfile in production.

The development environment for this platform runs using minikube, terraform, helmfile and skaffold.

Running an environment locally that mirrors production, using the same images, helmfiles, helm charts, etc., would look something like this:

minikube start --profile minikube-wbaas --kubernetes-version 1.21.4

cd ./tf/env/local && terraform apply

cd ./k8s/helmfile && helmfile --environment local --interactive apply

minikube --profile minikube-wbaas tunnel &

cd ./skaffold && skaffold dev

Skaffold adds a critical missing piece to the puzzle, allowing quick changes to code of deployed services to be rebuilt or synced into running services within a Kubernetes cluster.
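The commands above could be scripted as a single sequence. The following Python sketch only builds and prints a plan; it does not run anything. The step table mirrors the commands listed in this post, with the `start` subcommand on the first minikube call being an assumption, and the `STEPS` and `plan` names are hypothetical rather than part of any existing tool.

```python
import shlex

# (working directory, command line, run in background?)
# Paths are relative to the repository root, as in the post.
STEPS = [
    # Assumption: the cluster is created with the `start` subcommand.
    (".", "minikube start --profile minikube-wbaas --kubernetes-version 1.21.4", False),
    ("./tf/env/local", "terraform apply", False),
    ("./k8s/helmfile", "helmfile --environment local --interactive apply", False),
    # The tunnel keeps running, so it is marked as a background step.
    (".", "minikube --profile minikube-wbaas tunnel", True),
    ("./skaffold", "skaffold dev", False),
]


def plan(steps):
    """Return (cwd, argv, background) tuples without executing anything."""
    return [(cwd, shlex.split(command), background) for cwd, command, background in steps]


if __name__ == "__main__":
    # Dry run: print each step as an equivalent shell line.
    for cwd, argv, background in plan(STEPS):
        suffix = " &" if background else ""
        print(f"(cd {cwd} && {shlex.join(argv)}){suffix}")
```

Keeping the steps as data rather than as a shell script makes it easy to add a dry-run mode, skip steps, or change the working directory per step, which is the kind of thing a wrapping CLI would need.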

Final thoughts

- mwcli is currently at version 0.8.1 and is used by engineers at both WMDE & WMF

- Wikimedia continues to move towards Kubernetes

- Skaffold might fill one of the final gaps in terms of an “advanced” development environment that is near WMF production

- Everything we just saw could be bundled up in a CLI such as mwcli

- I don’t think there is a silver bullet in terms of development environments here, and for different use cases we probably want different things: probably a lighter-weight docker-compose based environment, and also a more WMF-production-like Kubernetes based environment.