Last month I wrote a history of AI agentic coding from my perspective, which leaned heavily on GitHub Copilot. One of the things I have really appreciated over the years is the packaged cost of Copilot compared to the apparent cost of using per-token priced APIs directly, or even the other packaged deals. However, at the end of this month GitHub Copilot is moving to usage-based billing, and there is now a Copilot Billing Preview tool that lets you compare what you have been paying with what you will be paying in the future.

In my last post I looked at my usage breakdown month by month, showing steady growth and shifts between the various models. All of that was mostly within the 10 USD per month plan, though this past month I have shifted to the 39 USD per month plan due to the new session and weekly token limits that people are complaining about online a fair bit. (I haven’t actually seen a hint of these on the 39 USD per month plan.)

However, next month this 39 USD is going to shoot up! And probably for good reason, as it looks like they might have been losing a billion+ a month in recent months? (More on that below.)

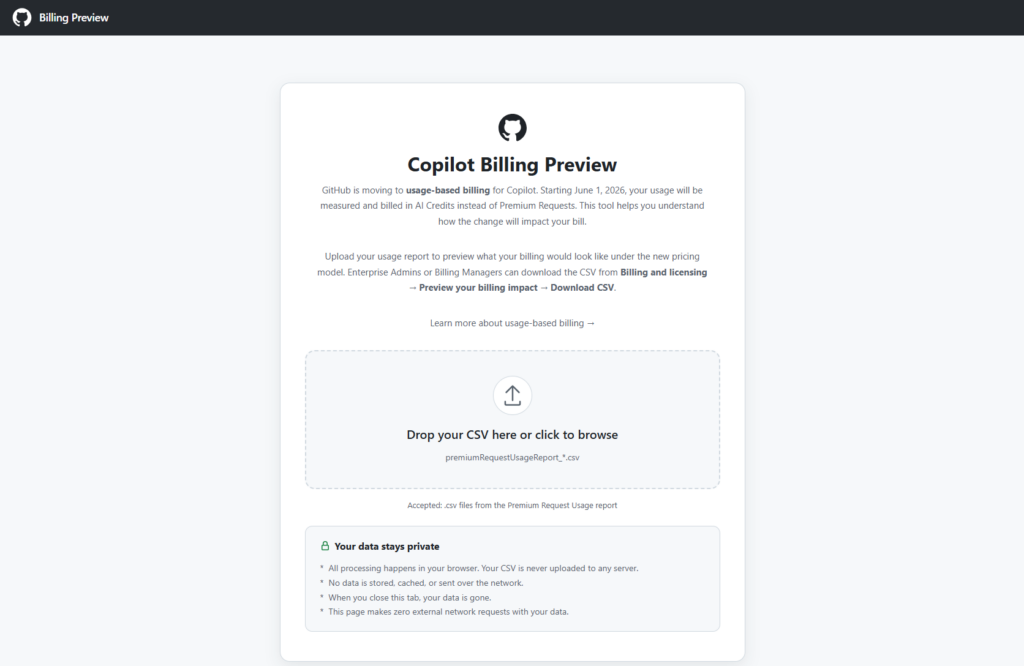

The tool is browser-based and just requires you to drop in a CSV file from the Premium request analytics of your account (which now has some additional fields). It then shows various visualizations in the browser and extracts useful data from the more verbose report, including comparisons between your previous cost and your apparent future cost with AI credits instead.

Month comparisons

I went back and downloaded all of my new premium request usage report data for this year, throughout which I slowly progressed from around 300 PRUs (premium requests used) per month toward and past 600 PRUs per month, largely due to increased cloud agent usage. In summary, this is what the difference between PRU-based billing and AIC (AI Credit) billing looks like for me.

| Month | Plan | PRUs | AICs | Current billing (PRUs) | Usage-based billing (AICs) |
| --- | --- | --- | --- | --- | --- |
| January 2026 | Pro (10 USD), 300 PRU | 293.14 | 1,059.761 | 10 USD | 10 USD |
| February 2026 | Pro (10 USD), 300 PRU | 318.03 | 2,306.479 | 10.72 USD | 18.06 USD |
| March 2026 | Pro (10 USD), 300 PRU | 719.09 | 39,728.397 | 26.76 USD | 392.28 USD |
| April 2026 | Pro (10 USD), 300 PRU | 563.74 | 39,911.737 | 20.55 USD | 394.12 USD |
| 1/2 of May 2026 | Pro (10 USD), 300 PRU | 354.63 | 31,017.761 | 1/2 of 39 USD | 310.18 USD |
| Projected May 2026 | Pro+ (39 USD), 1500 PRU | 700 | 60,000 | 39 USD | 620 USD |
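
To make the arithmetic behind the January to April rows easier to follow, here is a rough Python sketch that reproduces them. The rates are assumptions back-solved from my own numbers, not official pricing: 0.04 USD per PRU above the 300 included, 0.01 USD per AIC, and an apparent 1,500 AICs included with the 10 USD plan. The function names `pru_bill` and `aic_bill` are made up for illustration, and the May rows are not modelled.

```python
# All rates below are ASSUMPTIONS inferred from the table, not official pricing.
PRU_OVERAGE_USD = 0.04  # assumed price per premium request above the allowance
AIC_USD = 0.01          # assumed value of one AI credit


def pru_bill(prus, plan_usd=10.0, included_prus=300):
    """Estimate the current (PRU-based) monthly bill in USD."""
    overage = max(0.0, prus - included_prus)
    return round(plan_usd + overage * PRU_OVERAGE_USD, 2)


def aic_bill(aics, plan_usd=10.0, included_aics=1500):
    """Estimate the usage-based (AIC) monthly bill in USD.

    included_aics=1500 is a guess that happens to fit the Pro rows.
    """
    overage = max(0.0, aics - included_aics)
    return round(plan_usd + overage * AIC_USD, 2)


if __name__ == "__main__":
    # (month, PRUs, AICs) taken from the table above
    months = [
        ("January 2026", 293.14, 1059.761),
        ("February 2026", 318.03, 2306.479),
        ("March 2026", 719.09, 39728.397),
        ("April 2026", 563.74, 39911.737),
    ]
    for name, prus, aics in months:
        print(f"{name}: {pru_bill(prus)} USD now, {aic_bill(aics)} USD usage-based")
```

Under these assumptions the sketch lands on the same figures as the table for those four months, which suggests the jump comes almost entirely from how many AICs the heavier months consume rather than from any change in the base plan price.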