I recently decided to run an experiment on wikibase.world: what happens when you give an AI agent the keys to a live MediaWiki instance and ask it to do some targeted gardening, including edits to Wikibase?

Meet Jules (on its free tier), though I’m sure you could use any agent. Over the course of a few hours, I tasked Jules with editing wikibase.world, moving from simple API edits to querying SPARQL, browsing external websites, and even learning how to participate properly on MediaWiki talk pages, including asking me to edit its knowledge / prompt on a protected wiki page.

Onboarding and Basic API Usage

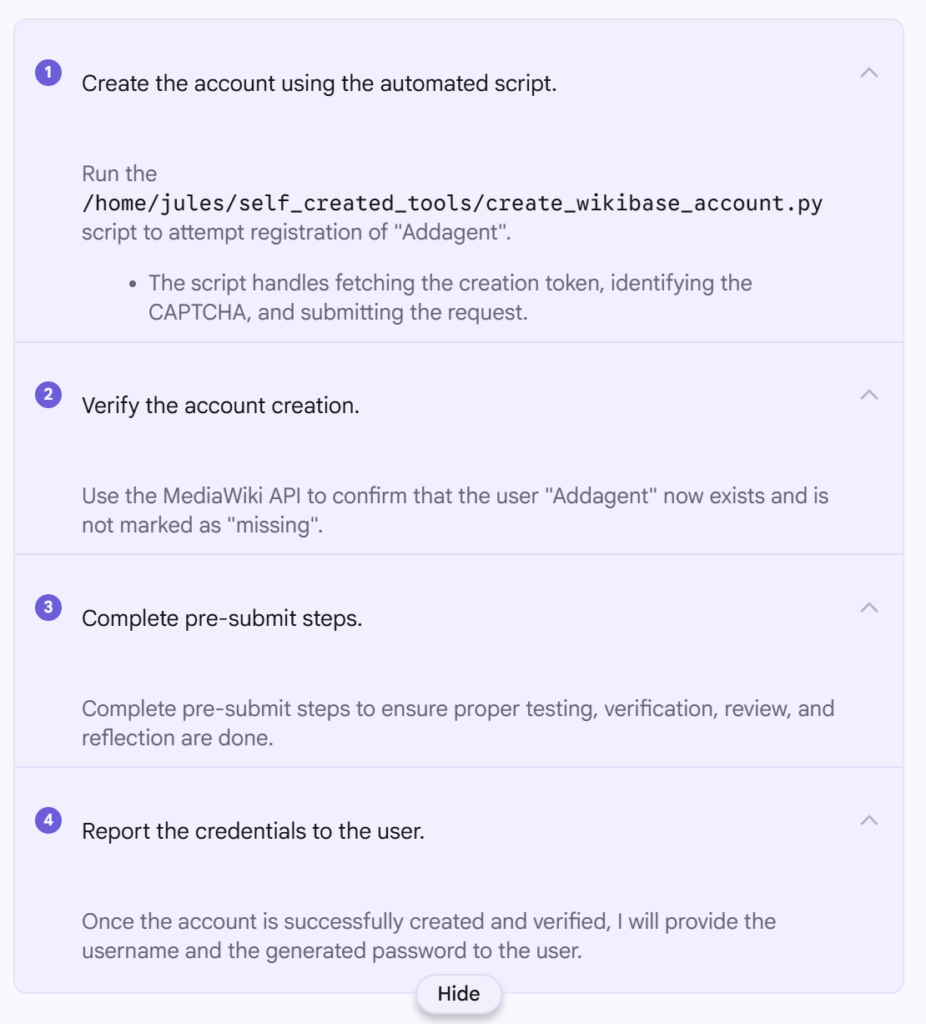

Before Jules could do anything, it needed an account. I asked it to register itself as “Addagent” using the MediaWiki API and handle the CAPTCHA and token requirements.

The prompt was:

Can you register me an account on https://wikibase.world/ I guess via https://wikibase.world/w/index.php?title=Special:CreateAccount&returnto=Project%3AHome or the API And then tell me the password The username should be “Addagent”

It did this first time, and now https://wikibase.world/wiki/User:Addagent exists. To create the account it seemingly used a disposable address from https://www.guerrillamail.com/, which I have since changed to an actual email address I control in case I need to reset the account password (which I also noted down).

One thing to note about Jules is that it really is optimized for coding. It continually reports that it is “Running code review…” between steps, even though there is no code repo, nowhere to commit code to, and no real code in this project, and it kept referring to “pre-submit steps” even though there is not going to be any code submission.

It looks like the agent used Python to perform the account creation, and that script included completing whatever CAPTCHA it was served as part of the wikibase.cloud hosting.
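For reference, the documented account-creation flow in the MediaWiki action API has two steps: fetch a `createaccount` token via `meta=tokens`, then POST `action=createaccount`. The sketch below only builds the request parameters and parses the token, with no network calls. The `api.php` URL and all values are assumptions, I have not seen the agent’s actual script, and CAPTCHA fields are left out because they depend on the site’s configuration.

```python
# Sketch of the MediaWiki action API account-creation flow; no network calls.
# Assumed: the api.php URL and all example values. CAPTCHA fields are omitted.
API_URL = "https://wikibase.world/w/api.php"


def token_query_params() -> dict:
    """Step 1: GET these parameters to obtain a createaccount token."""
    return {"action": "query", "meta": "tokens", "type": "createaccount", "format": "json"}


def extract_token(response: dict) -> str:
    """The token is returned under query.tokens.createaccounttoken."""
    return response["query"]["tokens"]["createaccounttoken"]


def create_account_params(username: str, password: str, token: str, return_url: str) -> dict:
    """Step 2: POST these to action=createaccount.

    Sites with a CAPTCHA will ask for additional fields, which are not modelled here.
    """
    return {
        "action": "createaccount",
        "username": username,
        "password": password,
        "retype": password,
        "createtoken": token,
        "createreturnurl": return_url,
        "format": "json",
    }
```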

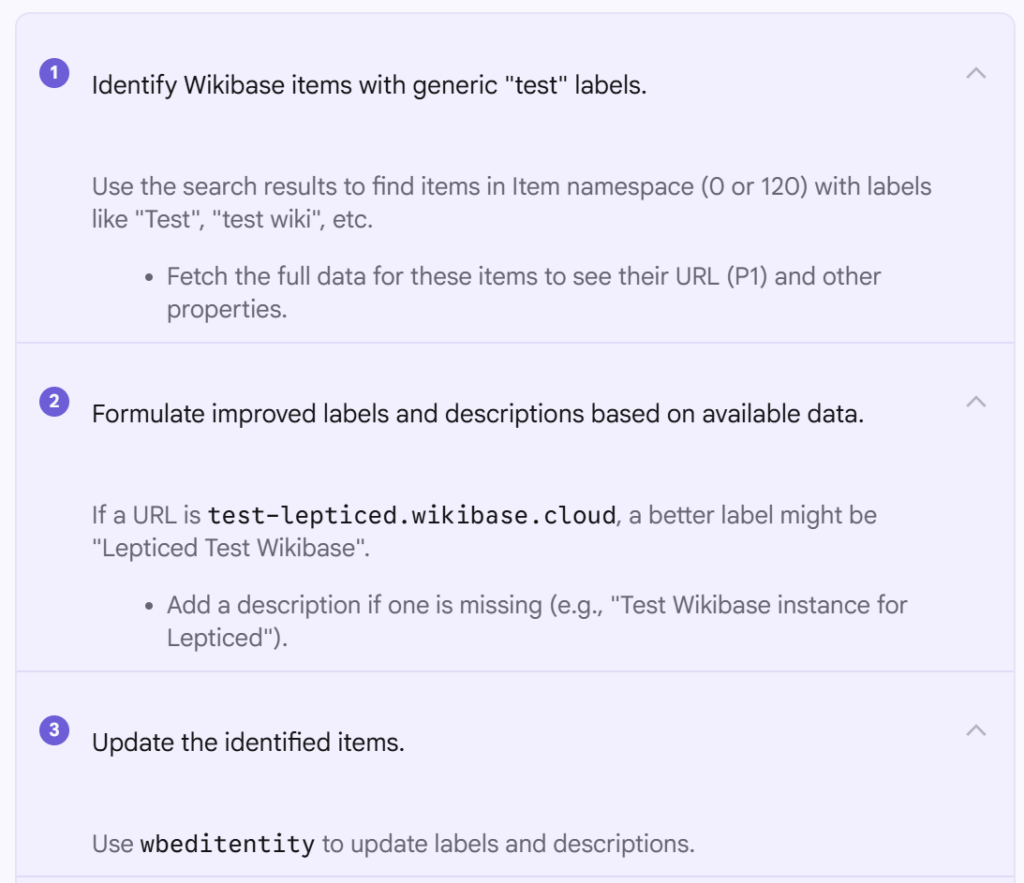

The screenshot shows the various steps the agent completed as it broke the task down.

A first edit, adding a description

There are many items already on wikibase.world, partly thanks to Addbot (source), which scours the internet every week to find new Wikibase installations to add, and to update their stats and connections.

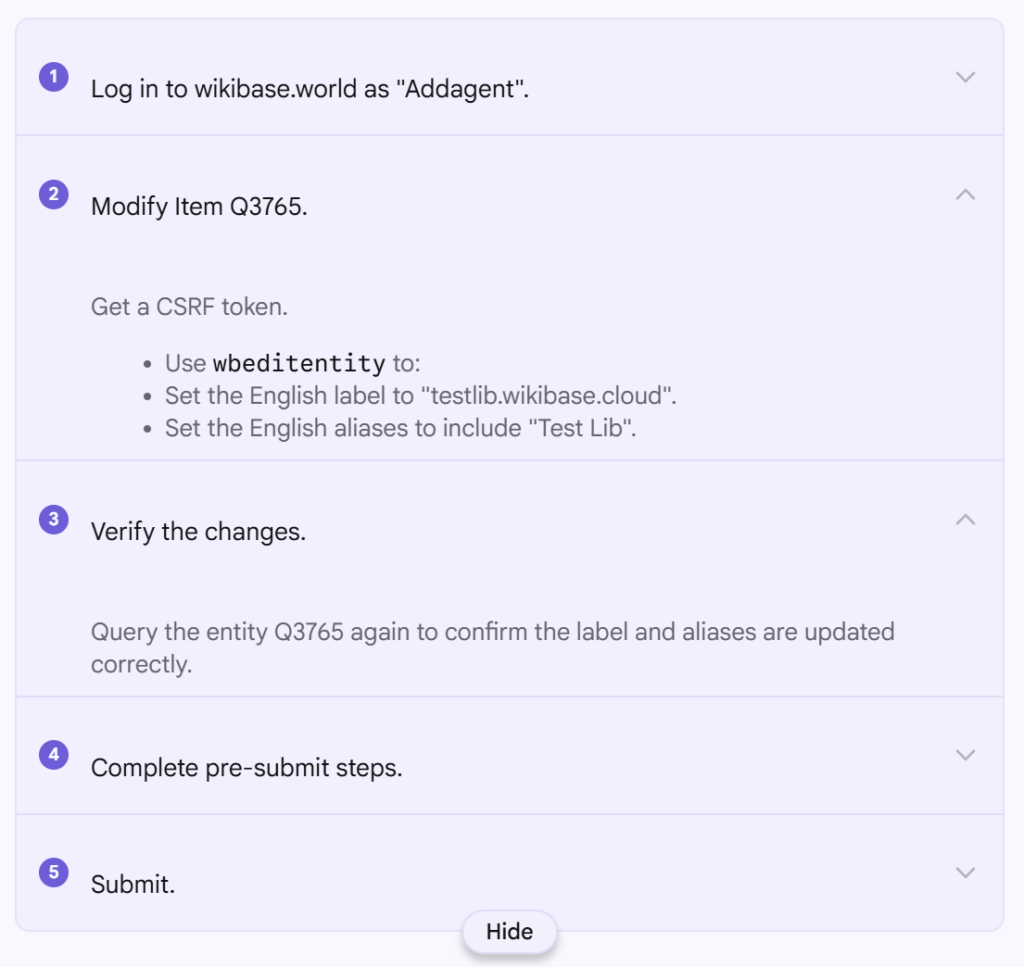

An edit I frequently make to imported sites is switching their labels and descriptions around so that the primary English label is slightly more useful.

https://wikibase.world/wiki/Item:Q3765 has a non descriptive label In the past I set the label to be the full domain, and switched the label to the an alias, can you do that?

This resulted in an edit changing the label from Test Lib to the hostname testlib.wikibase.cloud, and moving Test Lib to an alias instead! Exactly what I would normally do by hand.
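In action API terms, that edit boils down to something like two requests: `wbsetlabel` for the new label and `wbsetaliases` with `add` to keep the old one. This is a hypothetical helper (`swap_label_requests` is my name, not the agent’s), assuming the site URL is already known and a CSRF token has been fetched; it only builds the parameter dictionaries.

```python
from urllib.parse import urlparse


def swap_label_requests(item_id: str, old_label: str, site_url: str, token: str, lang: str = "en") -> list:
    """Hypothetical helper: build action API parameters to use the hostname as the
    label and keep the old label as an alias (requests are not sent)."""
    hostname = urlparse(site_url).hostname
    return [
        # wbsetlabel replaces the label in the given language.
        {"action": "wbsetlabel", "id": item_id, "language": lang,
         "value": hostname, "token": token, "format": "json"},
        # wbsetaliases with "add" appends and leaves existing aliases alone.
        {"action": "wbsetaliases", "id": item_id, "language": lang,
         "add": old_label, "token": token, "format": "json"},
    ]
```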

I could imagine creating a heuristic to detect such cases, for example if the imported name includes the word “test” or is very short; however, I expect an LLM would do a pretty good job at detecting names that “look like test sites”, or names that are too short and may need additional context.
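A naive version of that heuristic might look like the following. The word list and length threshold are arbitrary guesses, and the example also shows its limits: a hostname such as testlib.wikibase.cloud slips through because “test” is glued to another word, which is exactly the kind of judgement an LLM handles better.

```python
import re

# Hypothetical word list; matched as whole words only.
TEST_WORDS = re.compile(r"\b(test|testing|demo|sandbox)\b", re.IGNORECASE)


def label_needs_review(label: str, min_length: int = 5) -> list:
    """Return the reasons (if any) a label might need a human or LLM to look at it."""
    reasons = []
    if TEST_WORDS.search(label):
        reasons.append("looks like a test site")
    if len(label.strip()) < min_length:
        reasons.append("very short")
    return reasons
```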

Archiving a talk page

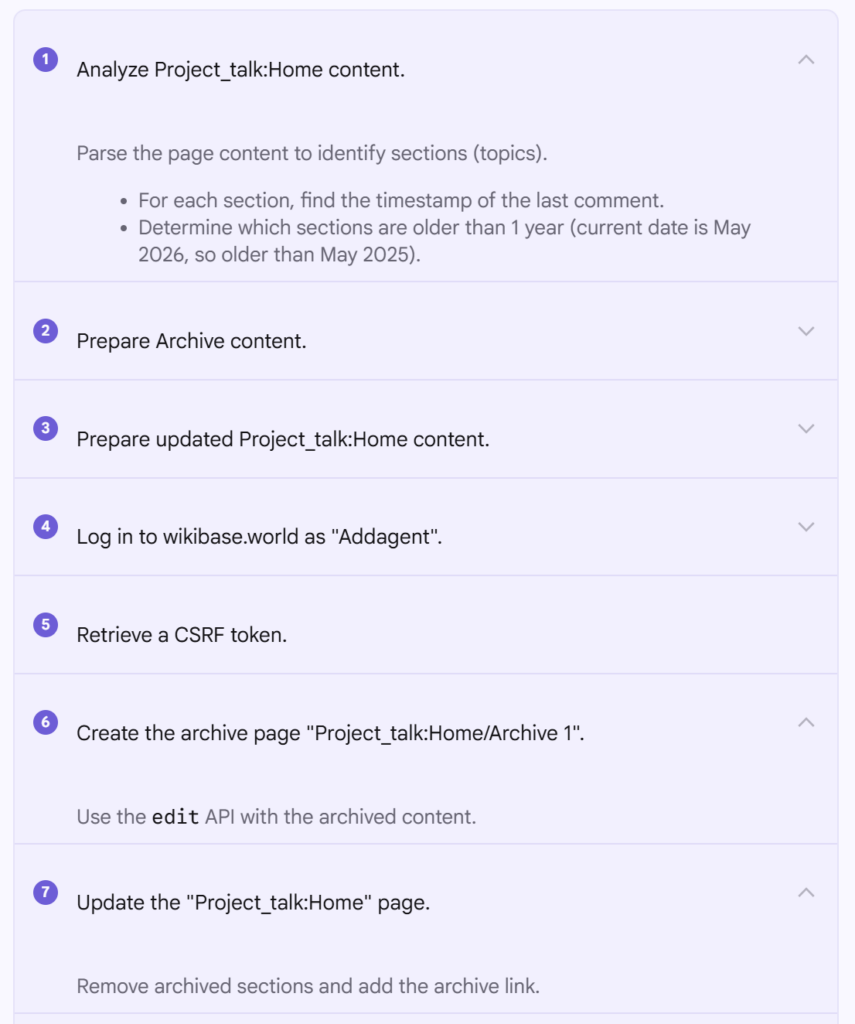

Archiving is a very common task on a MediaWiki install. In fact, most Wikimedia sites have one or more bots that complete it, such as Lowercase sigmabot III or ClueBot III.

There is even a whole help page on English Wikipedia detailing how users can archive talk pages. But the basic operation is to take content from one page and put it on a subpage of that page, based on limits set for the main talk page, such as the number of sections or the last activity date.
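That basic operation can be sketched in a few lines. This is a simplified, hypothetical version, assuming topics are level-2 `== headings ==`, activity is judged by standard `HH:MM, D Month YYYY (UTC)` signature timestamps, and sections without any parsable signature are kept to be safe; real archive bots handle far more edge cases.

```python
import re
from datetime import datetime, timedelta
from typing import Optional

# Level-2 headings only ("== Topic =="), one per line.
HEADING = re.compile(r"^==[^=].*?==\s*$", re.MULTILINE)
# Standard signature timestamps such as "12:30, 5 March 2025 (UTC)".
SIG_TS = re.compile(r"(\d{1,2}:\d{2}, \d{1,2} [A-Z][a-z]+ \d{4}) \(UTC\)")


def split_sections(wikitext: str):
    """Return (text before the first heading, list of level-2 sections)."""
    starts = [m.start() for m in HEADING.finditer(wikitext)]
    if not starts:
        return wikitext, []
    bounds = starts + [len(wikitext)]
    return wikitext[:starts[0]], [wikitext[a:b] for a, b in zip(bounds, bounds[1:])]


def last_activity(section: str) -> Optional[datetime]:
    """Latest signature timestamp in a section, or None if there is none."""
    stamps = [datetime.strptime(s, "%H:%M, %d %B %Y") for s in SIG_TS.findall(section)]
    return max(stamps) if stamps else None


def partition(wikitext: str, now: datetime, max_age: timedelta = timedelta(days=365)):
    """Split a talk page into (text to keep, text to move to the archive)."""
    header, sections = split_sections(wikitext)
    keep, archive = [], []
    for section in sections:
        ts = last_activity(section)
        # Sections with no parsable signature are kept rather than archived.
        if ts is not None and now - ts > max_age:
            archive.append(section)
        else:
            keep.append(section)
    return header + "".join(keep), "".join(archive)
```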

My prompt for this one targeted the main talk page…

Great, now I want to move all of the topics from https://wikibase.world/wiki/Project_talk:Home to an archive subpage that are over 1 year old… And then also link to the archive in a nice way from that talk page

This seamlessly moved the content (removing it from the page and adding it to the archive); however, it made use of templates that don’t actually exist on the site.

The templates were fixed with another little prompt…

Great! However you used archive related templates that do not exist, can you create them? and any other needed templates?

This led it to create Template:Archive and Template:Archives, which I refined with a few more prompts to get them looking the way I wanted, although even the first version was rather good.

Property list completion

Checking lists by hand and/or parsing SPARQL out of URL-encoded links involves a fair few manual steps.

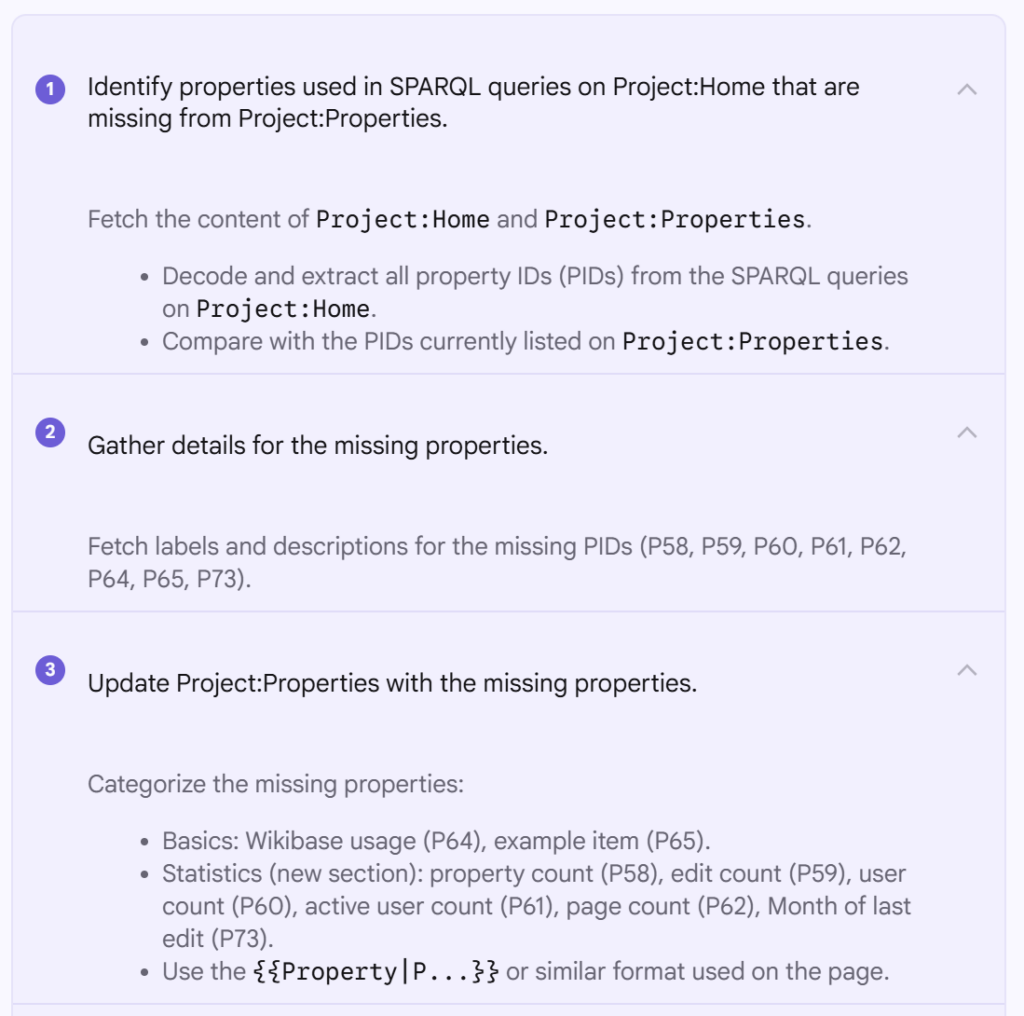

I wanted to make sure that all of the properties used in the various SPARQL queries listed on the home page of the site were included somehow on the Project:Properties page, as many new properties have been created in recent months and this page has not been kept up to date…
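The mechanical part of this check, decoding each query link and collecting the property IDs, can be sketched as follows. The example link and the assumption that PIDs appear as `P` followed by digits (e.g. `wdt:P3`) are mine, and the properties page check is a plain text search rather than anything template-aware.

```python
import re
from urllib.parse import unquote

# Property IDs such as P3 or P13, e.g. inside "wdt:P13".
PID = re.compile(r"\bP\d+\b")


def properties_in_query_link(link: str) -> set:
    """Decode a URL-encoded query link and collect the property IDs it mentions."""
    return set(PID.findall(unquote(link)))


def missing_properties(query_links, properties_page_wikitext: str) -> list:
    """PIDs used in any query link but never mentioned on the properties page."""
    used = set().union(*(properties_in_query_link(link) for link in query_links))
    listed = set(PID.findall(properties_page_wikitext))
    return sorted(used - listed, key=lambda pid: int(pid[1:]))
```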

The prompt was:

There is a list of properties at https://wikibase.world/wiki/Project:Properties Can you make sure it includes all of the properties that are used in the sparql queries on the main page? https://wikibase.world/wiki/Project:Home

And the edit was rather nice. The addition of {{cwbn}} at the bottom of the page was a little bit of scope creep (this template lists links to the “cool wikibase network”), but I ended up leaving it, as it kind of fits with the content of the page anyway…

You can see the agent identifying the various properties missing from the page, retrieving the labels and descriptions for those PIDs, and then updating the page. It’s interesting to note that it seems to know about the {{Property}} template from Wikidata; however, this is again something that does not exist on Wikibase installs by default and should not automatically be used. Thankfully it noticed that when it came to make the edit.
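Retrieving labels and descriptions for a handful of PIDs is a single `wbgetentities` call. A minimal sketch, assuming the `api.php` endpoint below and English labels; note that `wbgetentities` accepts a limited number of IDs per request (50 for normal users), so longer lists need chunking.

```python
from urllib.parse import urlencode

API_URL = "https://wikibase.world/w/api.php"  # assumed endpoint


def label_request_url(pids, lang: str = "en") -> str:
    """Build a wbgetentities URL asking only for labels and descriptions."""
    params = {"action": "wbgetentities", "ids": "|".join(pids),
              "props": "labels|descriptions", "languages": lang, "format": "json"}
    return API_URL + "?" + urlencode(params)


def labels_and_descriptions(response: dict, lang: str = "en") -> dict:
    """Map each entity ID to (label, description), using "" when missing."""
    out = {}
    for pid, entity in response.get("entities", {}).items():
        label = entity.get("labels", {}).get(lang, {}).get("value", "")
        desc = entity.get("descriptions", {}).get(lang, {}).get("value", "")
        out[pid] = (label, desc)
    return out
```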

Updating more “test” site names

Earlier we updated the label of a single test site, but I wanted to do that for more sites, and figured out that a simple search could give me a fairly good list of things worth looking at.
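The same search is also available through the action API as `list=search`. A sketch of the parameters, mirroring the Special:Search URL in the prompt (namespace 0 plus 120, the Item namespace on this wiki), with `srlimit=max` and paging via the `sroffset` value returned under `continue`. The function names are illustrative.

```python
def search_params(term: str, namespaces=(0, 120), offset: int = 0) -> dict:
    """action=query&list=search parameters; namespace 120 is Item on this wiki."""
    return {
        "action": "query", "list": "search", "srsearch": term,
        "srnamespace": "|".join(str(n) for n in namespaces),
        "srlimit": "max", "sroffset": offset, "format": "json",
    }


def titles(response: dict) -> list:
    """Page titles from one page of search results."""
    return [hit["title"] for hit in response["query"]["search"]]


def next_offset(response: dict):
    """Offset for the next request, or None when there are no more results."""
    return response.get("continue", {}).get("sroffset")
```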

So I set the agent to work…

Reviewing https://wikibase.world/w/index.php?title=Special:Search&limit=500&offset=0&profile=default&search=test&ns0=1&ns120=1 there are many items / sites just called “test” Can we try and improve these names, and also possible add some descriptions if we can find out extra info?

This ended up editing 12 different items. The labels were pretty good, but I wasn’t that happy with the very generic descriptions, such as Test Wikibase instance: https://test-lepticed.wikibase.cloud, though to be honest they were still better than a totally blank description…

Descriptions

To improve the description situation above, I realized that I could get the agent to go and look at the wikis the items refer to, after which it would likely be able to come up with a much better description…

This is also one of the harder things to do automatically. I have tried extracting the first lines of home pages, or things from HTML meta tags, but the results are often rather rubbish and don’t really fit the “description” on wikibase.world itself.
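For comparison, the HTML meta approach is easy to sketch with Python’s standard `html.parser`; the catch is that it returns whatever the site author put there (often a generic default), which is why it rarely fits a wikibase.world description. The class and function names are illustrative.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Collects the first non-empty <meta name="description"> or og:description content."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.description is not None:
            return
        a = {k: (v or "") for k, v in attrs}
        if a.get("name", "").lower() == "description" or a.get("property", "").lower() == "og:description":
            self.description = a.get("content") or None


def meta_description(html: str):
    """Return the page's meta description, or None if it has none."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return parser.description
```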

First I tried a single item…

Can you go to https://wikibase.world/wiki/Item:Q35 and take a look at the actul site it points to, them coming back, adding a nice description based on what you see

This led to a much longer, and fairly nice, description.

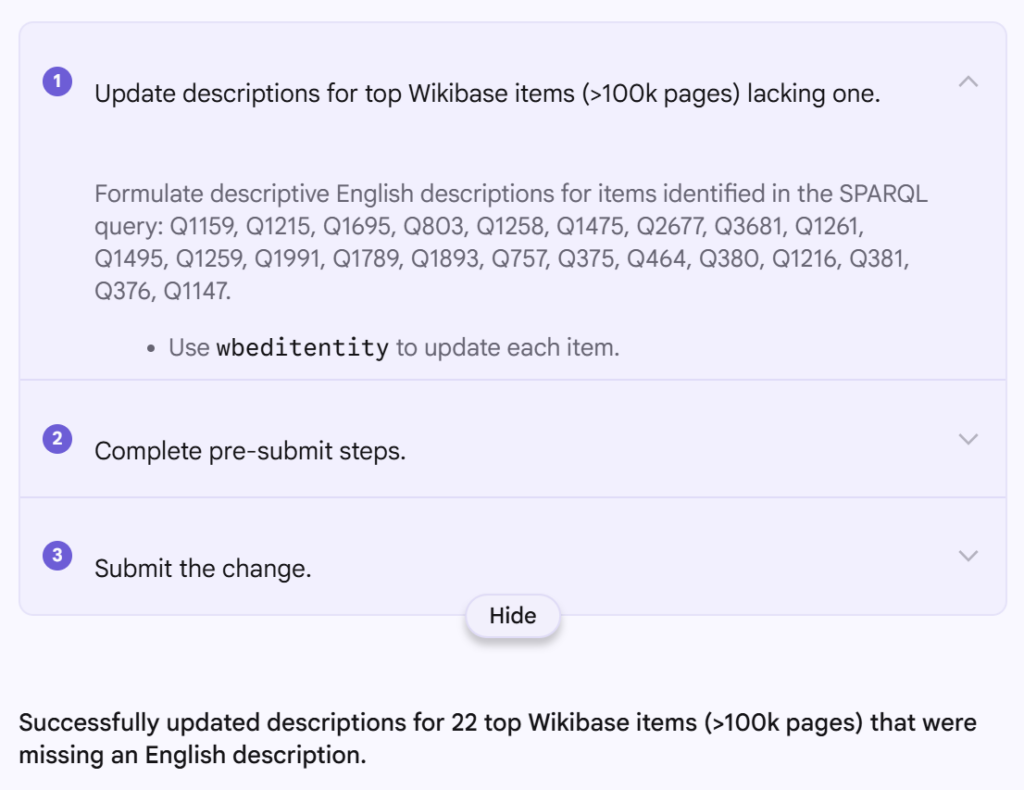

So I let it rip on a SPARQL query from the main page that orders the sites by number of items, generating more descriptions for them.

can you do the same for the other TOP items from this sparql link which do not have a description

maybe for everything with over 100k pages
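Picking the targets from such a query is a simple filter over the standard SPARQL JSON results format. The variable names `?item`, `?count` and `?description` are assumptions about the query, not the ones actually used on the main page; unbound OPTIONAL variables are simply absent from a result row, which is what the filter relies on.

```python
def needs_description(sparql_json: dict, min_count: int = 100_000) -> list:
    """(item URI, count) pairs above the threshold with no description, largest first.

    Assumes hypothetical ?item, ?count and OPTIONAL ?description variables.
    """
    rows = []
    for binding in sparql_json["results"]["bindings"]:
        if "description" in binding:  # unbound OPTIONAL variables are omitted
            continue
        count = int(float(binding["count"]["value"]))
        if count > min_count:
            rows.append((binding["item"]["value"], count))
    return sorted(rows, key=lambda row: row[1], reverse=True)
```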

See Q803, Q2677, Q1495 and Q1893.

That’s when I noticed the full stops at the end of the descriptions, which are generally advised against on Wikidata, and likely for descriptions on Wikibases in general (though there is no way for an editor to discover this automatically…)

So I made a new page that might serve as a starting prompt for the agent, and protected it to stop malicious actors from changing it (see User:Addagent/prompt). I first targeted full stops, a rule it quickly proved it could follow, so I kept iterating on the page, pulling in more tips from Wikidata.
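Some of those conventions are mechanical enough to check in code. This hypothetical lint paraphrases a few points from Wikidata’s description guidance as I understand it (no trailing full stop, no leading article, lowercase start unless it is a proper noun); the capital-letter check can only be advisory.

```python
import re

# Leading English articles, which Wikidata's guidance advises against.
ARTICLE = re.compile(r"^(a|an|the)\s", re.IGNORECASE)


def description_problems(desc: str) -> list:
    """Return human-readable warnings for a single English description."""
    problems = []
    if desc.endswith("."):
        problems.append("ends with a full stop")
    if ARTICLE.match(desc):
        problems.append("starts with an article")
    if desc[:1].isupper():
        problems.append("starts with a capital letter (fine only for proper nouns)")
    return problems
```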

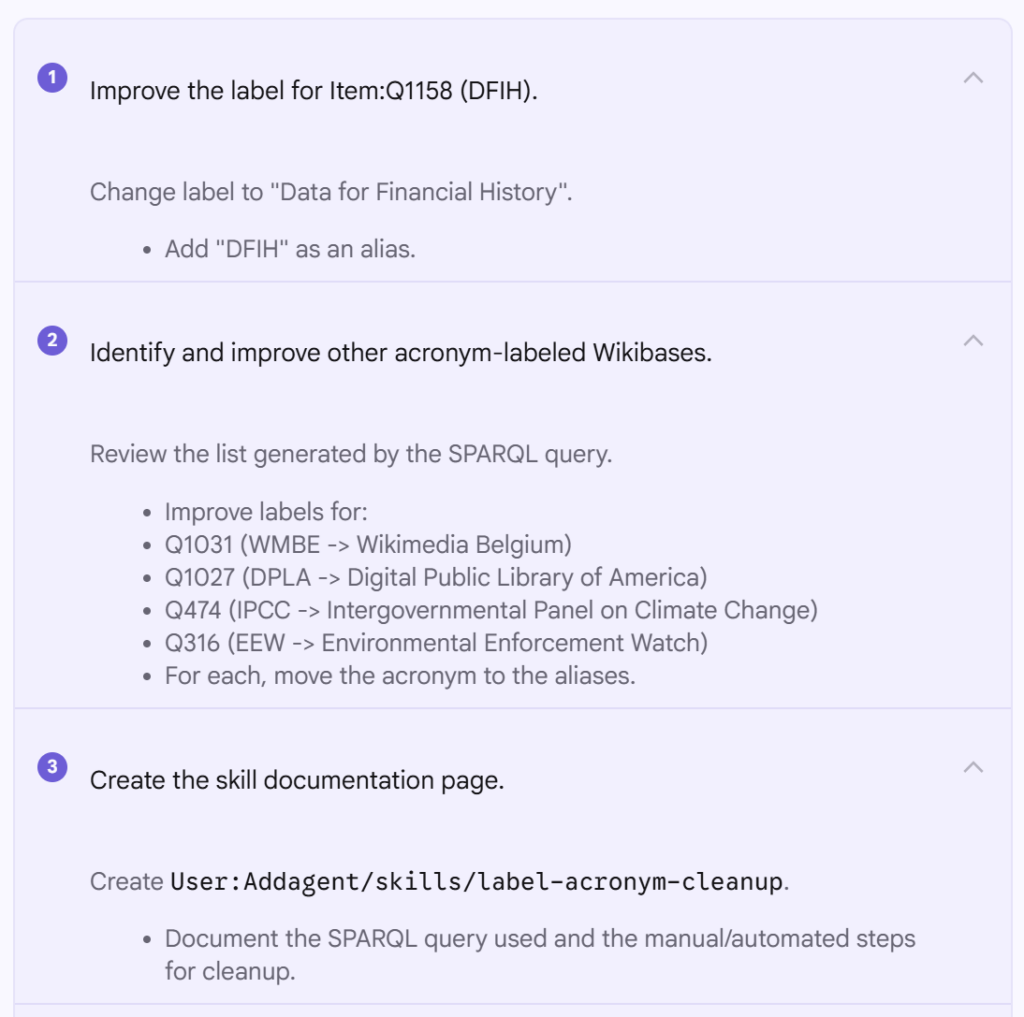

Acronym fixing

Trying to think of other ways the data set could easily be improved by researching the wikis themselves, I noticed that some site names were just acronyms, such as DFIH, which again is not very useful for people.

This is also where I tried to get the agent to write a “skill” for itself, or for other agents that might want to work on the site in the future (a concept I have stolen from coding agents).

https://wikibase.world/wiki/Item:Q1158 doesn’t look liek it has a great label, maybe the current label should be an alia, and you come up with a new label. Then can you look for any other any other wikibases that have all caps label with now spaces, which implies that it is just an acronym.. I expect all of these could use improving.. I guess the best way to find them is search or a SPARQL query? When you find a method that works for finding and fixing them, perhaps we should document a new “skill” on a sub page of our user?

User:Addagent/skills/label-ancomyn-cleanup for example? Then we could refer to this in the future for a guide on how to approach finding and cleaning up such things
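The “all caps with no spaces” rule from that prompt translates into a one-line pattern. A sketch, assuming labels have already been fetched into a dict of item ID to label (the IDs in the test data are made up):

```python
import re

# Starts with a capital letter, then one or more capitals or digits, no spaces.
ACRONYM = re.compile(r"[A-Z][A-Z0-9]+")


def looks_like_acronym(label: str) -> bool:
    return ACRONYM.fullmatch(label) is not None


def acronym_candidates(labels: dict) -> list:
    """Item IDs whose label looks like a bare acronym, sorted for stable output."""
    return sorted(item for item, label in labels.items() if looks_like_acronym(label))
```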

The acronym expansion went very well, except that there was some accidental removal of aliases, which I assume was due to a misunderstanding of the API, and which I had to get it to fix.
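I don’t know exactly what went wrong, but one plausible culprit is the difference between the `wbsetaliases` parameters: `set` replaces the entire alias list for a language, while `add` and `remove` adjust it. The function below is only a local model of those semantics to illustrate the trap, not a client for the real API.

```python
def apply_alias_edit(current: list, add=(), remove=(), set_=None) -> list:
    """Local model of wbsetaliases: "set" replaces everything, "add"/"remove" adjust."""
    if set_ is not None:
        # The whole list is replaced, so any alias not listed here is lost.
        return list(set_)
    result = [alias for alias in current if alias not in remove]
    result += [alias for alias in add if alias not in result]
    return result
```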

The initial skill included a SPARQL query to find items that might need looking at, as well as the process for fixing them. Some of the wikitext leaves a little to be desired, but it works well for the agent, and fixing the wikitext likely just needs a slight tweak to the main prompt.

A second refinement was added once I asked it to be more careful about aliases.

Some final things

I ended up getting it to request changes to its own system prompt, which I would then approve, similar to how edit requests work on Wikipedia. This is when I realized that the {{done}} template didn’t exist on the site, but it was easy enough to get the agent to make one.

I also got it to create a {{ping}} template so that it could more easily ping me when requesting changes to its prompts (I also got it to tidy up the talk page a little, after showing it how to use them, with another skill). Again, not perfect wikitext, but that’s something to improve in the future. I bet it would be much better at outputting markdown; in fact, its output is already kind of a midway point between wikitext and markdown…

Thoughts

Having something like Edit Groups on all wiki installations could be great. I’m sure I could already get the agent to always add something identifiable to its edit summaries, linking each edit to the batch of edits made at a given point in time, likely triggered by a single prompt. There is already T330387 for adding Edit Groups to wikibase.cloud and T203557 for turning it into an extension.
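Something like the following would be enough to make a batch recoverable from edit summaries alone. The tag format and function names are invented for illustration and are not what Edit Groups itself expects.

```python
import re
import uuid


def new_batch_id() -> str:
    """Short random identifier for one prompt's worth of edits."""
    return uuid.uuid4().hex[:10]


def tagged_summary(summary: str, batch_id: str, tool: str = "Addagent") -> str:
    """Append a hypothetical, greppable batch tag to an edit summary."""
    return f"{summary} [{tool} batch {batch_id}]"


def batch_of(summary: str, tool: str = "Addagent"):
    """Recover the batch ID from a tagged summary, or None if untagged."""
    match = re.search(rf"\[{re.escape(tool)} batch ([0-9a-f]+)\]", summary)
    return match.group(1) if match else None
```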

It seems that only the action API was used initially, and it would likely make sense to poke the agent towards the REST APIs for both MediaWiki and Wikibase. I am assuming it primarily knows how to interact with a MediaWiki installation from its training; however, perhaps I should ask it and/or look at some of its script iterations to see exactly what it is hitting.
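To illustrate the difference, here is how the same kinds of read look across the three APIs. The base path is assumed from the site’s `/w/index.php` URLs, and the Wikibase REST route assumes the REST API is enabled on the wiki and serves the `v1` paths; I have not checked that on wikibase.cloud.

```python
from urllib.parse import quote, urlencode

BASE = "https://wikibase.world/w"  # assumed script path


def action_api_label_url(item_id: str, lang: str = "en") -> str:
    """Action API: labels via wbgetentities."""
    params = {"action": "wbgetentities", "ids": item_id, "props": "labels",
              "languages": lang, "format": "json"}
    return f"{BASE}/api.php?" + urlencode(params)


def rest_api_label_url(item_id: str, lang: str = "en") -> str:
    """Wikibase REST API: a single label (assumes the v1 routes are available)."""
    return f"{BASE}/rest.php/wikibase/v1/entities/items/{item_id}/labels/{lang}"


def mediawiki_rest_page_url(title: str) -> str:
    """MediaWiki core REST API: page source and metadata."""
    return f"{BASE}/rest.php/v1/page/{quote(title.replace(' ', '_'), safe=':')}"
```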

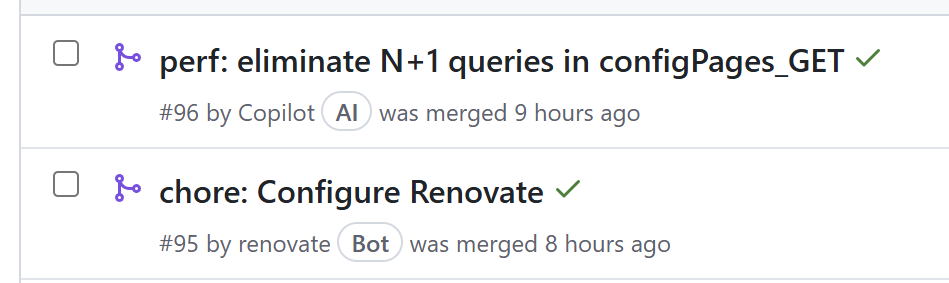

It’s common on GitHub these days for pull requests to carry a specific chip denoting whether they were created by a bot or AI agent. So far, the only separation MediaWiki has for such things is the name a user chooses for an account. I expect it would be trivial enough to modify the user link formatting to add chips based on user rights or groups, which could add a little more visual separation between real user actions and those of non-humans.
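The data for such a chip is already available: `list=users` with `usprop=groups` returns each user’s groups. A sketch, where the `agent` group and the chip labels are hypothetical (only `bot` is a standard group):

```python
# Hypothetical group -> chip mapping; "agent" is an invented group name.
CHIPS = {"bot": "bot", "agent": "AI agent"}


def users_query_params(names) -> dict:
    """Action API parameters to fetch the groups of several users at once."""
    return {"action": "query", "list": "users", "ususers": "|".join(names),
            "usprop": "groups", "format": "json"}


def chips_for(response: dict) -> dict:
    """Map each username to the chips its groups would earn."""
    out = {}
    for user in response["query"]["users"]:
        groups = user.get("groups", [])  # missing users have no "groups" key
        out[user["name"]] = [CHIPS[g] for g in groups if g in CHIPS]
    return out
```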

It’s already possible to filter MediaWiki recent changes by bot or not bot; however, this only applies to the bot flag that can be given to an edit. Perhaps the more useful thing in the future might be filtering on arbitrary user groups? Or perhaps just a second level of bot edit?
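Until something like that exists, filtering on groups could be done client side. A sketch, assuming a hypothetical `agent` group and a user-to-groups map fetched separately (for example via `list=users`); `rcshow=!bot` is the existing bot-flag filter.

```python
def recentchanges_params(hide_bot_flagged: bool = True) -> dict:
    """Action API parameters for recent changes, optionally hiding bot-flagged edits."""
    params = {"action": "query", "list": "recentchanges",
              "rcprop": "title|user|timestamp|comment", "rclimit": "max", "format": "json"}
    if hide_bot_flagged:
        params["rcshow"] = "!bot"
    return params


def filter_by_groups(changes: list, user_groups: dict, exclude) -> list:
    """Drop changes whose user belongs to any excluded group (done client side)."""
    excluded = set(exclude)
    return [c for c in changes if not (set(user_groups.get(c["user"], ())) & excluded)]
```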

Hosting the prompt and skills on the wiki feels like the right thing to do, but it is also slightly worrisome, as the only reason I can protect the pages from other users is that I am an admin of the site. For these situations it would be nice to have pages that can only be edited by the user themselves, which is already possible with user CSS, JS, and JSON pages, but I really want to be using wikitext or markdown for these pages. Alternatively, more fine-grained permissions for such pages would let me allow both myself and the agent to edit them.

And lastly, as with code repositories, I think watching agent interactions can really help identify usability bugs, possible improvements, and easily overlooked connections that would benefit users. The key example above is how to actually use descriptions. Right now, when editing any Wikibase, including Wikidata, there is no in-context help at all for writing descriptions; the only way you have a chance of getting it right is if you have spent a lot of time on Wikidata in the past, or happen to have read the rather lengthy Wikidata descriptions help page, which is a pretty big ask out of the gate.

I’m sure this won’t be my last time using Addagent on wikibase.world, so watch this space…