Earlier this week, someone asked me if they were perhaps late to AI-assisted development, as they had only dived into it in the past 2 months (using GitHub Copilot) and were already seeing large gains in how far a small team’s time could stretch. I thought for a second and responded that they might have seen comparably worthwhile gains roughly a year ago. In this post, I’m going to look back over the past few years to try and figure out what the timeline has actually looked like.

My own vague memory isn’t very certain: roughly speaking, pre-COVID I don’t remember much AI being used in software development, and after COVID we were in the AI era? The first place I personally remember using assisted development was via the initial VSCode GitHub Copilot auto completions, which were of questionable use at the time but still showed promise. Included along the way will likely be the first version of Claude Code, Gemini entering the scene, and within GitHub Copilot the advancements from completions, to ask & edit, to agent, and finally autopilot and cloud agents.

2017 – 2022: The Transformer era

- June 2017: The “Attention Is All You Need” paper was published by Google, introducing the Transformer architecture and paving the way for the era of transformer models.

- June 2018: OpenAI releases GPT-1.

- February 2019: OpenAI releases GPT-2.

- November 2019: Initial COVID-19 outbreaks.

- March 2020: WHO declares COVID-19 a pandemic.

- June 2020: OpenAI releases GPT-3. Developers discover its ability to generate HTML, CSS and code snippets despite not being trained for programming.

And although there are other notable mentions, such as BERT by Google in 2018 and CodeBERT in 2020, most of the above comes far before most people will have started looking at or using AI for coding, and that includes me: I initially started using models during development with the introduction of GitHub Copilot and its autocompletions within VSCode.

GitHub Copilot Technical Preview (June 2021+)

My email invitation to the GitHub Copilot Technical Preview came in on 8th July 2021, and it looks like the public announcement on the GitHub blog can still be found, dated 29th June 2021.

I got stuck in right away, as at that time VSCode was already my primary IDE, and wrote at least one blog post on 11 July 2021 where I looked at Wikidata through GitHub Copilot, trying to hint the auto completions toward writing something meaningful for me.

At this time however, the completions were generally very hit and miss. You had the ability to cycle through a few variations, and if you wanted to quickly template out a loop or write a basic function this could indeed save you some time. But it would also get in the way of existing templating, completion and suggestion tooling, leading to a degraded situation in many other cases.

I believe Copilot left the technical preview and became generally available in June 2022, in auto completion mode.

Chat, starting with ChatGPT (November 2022+)

Although ChatGPT was primarily a general chat bot, this essentially opened the gates for the masses to try out what some in the know already knew, which is that GPT-3 could generate code. ChatGPT was closely followed by others, but ChatGPT, being the first to be free for folks to use, obviously got the most immediate adoption.

I wrote a series of posts in March 2023 playing around with these various different chat bots and chat models (not specifically looking at code yet):

- What is Wikibase Cloud (according to ChatGPT)

- What is Wikibase Cloud (according to Bing AI)

- Asking Bing Chat AI to reference Wikidata

- What is Wikibase Cloud (According to Bard)

Throughout much of 2022 and 2023 I was mainly working from a boat, and thus I was generally doing less day to day development, hence I was a little light on funneling coding problems into the chat apps.

I did, and still do, use Gemini chat in the browser to fire off many technical questions, and even get scripts and snippets made for me. For more complex document and source scanning (reading data sheets, comparing specs) I use NotebookLM.

GitHub Copilot Chat

In the June 2023 GitHub Copilot update the chat feature was introduced with a waitlist to sign up to, but had already been available to insiders for some period of time at this point.

It was quite fun to read through the monthly, and sometimes weekly, changelog for Copilot, seeing that July 2023 brought the multiline input box, August 2023 brought chat an 8k context window and October 2023 brought multi-turn chat conversations, many of which seem so trivially important now looking back.

Context & Tools (November 2023+)

I’m not sure if GitHub Copilot was one of the first to introduce the concept of a coding agent, but prior to November 2023, GitHub Copilot was powered by GPT-3, and November brought GPT-4 into the mix, along with agents. My quick research can find dates for various other agent modes and AI-first IDEs, but all of those dates appear to be 2024 onward.

However, during this early agent time period I actually found the most productive way to use the VSCode integration was the inline context aware chats, so I’ll call this late 2023 period context and tooling, rather than just agents.

Agents (2024+)

The point at which I would actually consider GitHub Copilot to have a sort of agentic mode was the point at which a code editing session with multi-file editing could be used, and that was in preview in October 2024.

There was certainly some movement in the agent space around this time, including AI-first IDEs and tools such as:

- Early 2024: Devin could plan, browse the web, debug, and execute multi-step coding tasks inside its own secure sandbox

- November 2024: Windsurf IDE was launched?

- February 2025: Preview launch of Claude Code

Anyway, tracking down the dates is hard, but from my view Copilot got there first, the edit mode was great, and this is where things started to ramp up. I only started hearing about folks really getting into Claude Code around 6 months after I was getting stuck in with Copilot edits.

That brings us to a hackathon that I attended in May 2025, where I sat with a guy called Daanvr for some time looking at Claude Code vs GitHub Copilot and basically comparing workflows, costs and success while working on some little projects (some of which were silly little projects). I worked on some major upgrades to my WikiCrowd tool, but also made a silly MediaWiki extension which integrated an asteroid game with sunflowers, and which made use of various MediaWiki concepts that are normally hard to get started with, all from a few simple prompts.

Daanvr was using Claude Code and I believe back then was still frequently topping up the account while getting the background Claude agent to iterate on his project, easily spending tens of dollars an hour? However, using the packaged and bundled approach that GitHub has been using since the beginning, all of my experimentation was basically still within my monthly allowance.

I want to do a little look through the GitHub logs at the end of this post checking what my AI development spend has been since day 1, but I’ll save that for later.

If this hackathon showed me anything, it’s that I was personally already 4 years along this AI enhanced development journey, and others had not yet dipped their toe into the waters.

And, well worth noting, despite calling things “agents”, or at least using the term back in 2023, GitHub actually released their mode called “agent” mode in February 2025, which leveled up from the previous multi-file edit mode toward something that could “iterate on its own” and interact with more tooling.

Modelsplosion (2024+)

Now, I’m sure I have missed some of these, and these dates are not model release dates, or dates that they were released via some other coding system, but are specifically taken from the GitHub Copilot changelogs, as Copilot was generally how I was trying out the new models.

- November 2024

- February 2025

- March 2025

gpt-4o-copilot again?

- May 2025

- August 2025

- September 2025

- October 2025

- November 2025

- December 2025

And I think I’ll stop the listing of the modelsplosion at the end of 2025, as my eyes are blurry after reading through all of those changelogs (yes I read them and didn’t just get an AI to do it for me…)

As part of trying to compare all of these models for my own benefit, and also compare the same models in a few different tools, I also tried screen recording a run-through of a golang kata with the same prompts each time, in July 2025 and again in January 2026. My overall TL;DR would be that there is a big difference between the older and newer models, and also between the larger and smaller models, things do continue to improve, pay per token costs silly money, and GitHub Copilot is still my favourite (primarily when comparing Google Antigravity, Claude Code and GitHub Copilot).

And at some point as part of the agentic model explosion of 2025 – 2026 I would say the scales tipped for me, or the line was crossed, where I could mostly see the net gain and could imagine that others would too (such as the person that asked me, prompting this entire writeup). So I’m glad my guess of ~1 year was accurate, which looking at the timeline of models landing in Copilot, was around when the first Sonnet model landed!

Cloud (2024+)

The next wave of impact came through segregated agents, background working, and cloud setups. I believe that was first, and is maybe still, called GitHub Copilot Workspace, becoming available in April 2024. I’m not sure I jumped on this one quite as soon, primarily getting to grips with cloud based agents through Google Jules from Google Labs, which was working in December 2024.

Agents are most useful if you can have them fully roundtrip in a development environment, manually verifying their changes but also writing unit and/or e2e tests that can be run as part of their session. This is no different with the cloud agents, except you have the additional exercise of setting up the project(s) quickly and efficiently for the agents to work on.

They have been supremely useful for small UI related jobs and tasks, particularly for thoughts I have while out and about, or small tasks from the backlog that are already well scoped, specified, and unlikely to wiggle away in the wrong direction. I can already imagine a world where these could be automatically picked off a backlog, specifically being tagged as ai-ready or such, and having PRs appear, and I have no doubt that some folks are already doing this.

Again, I’m sure there are examples of cloud agents running by other companies ahead of this time, but Copilot and Jules were what I was primarily looking at.

My GitHub Copilot usage

Although I have used the various versions of Copilot since 2021, they really didn’t have any idea how to bill it in the early days, and even once they did start billing it, usage metrics / request analytics were not added until late 2025.

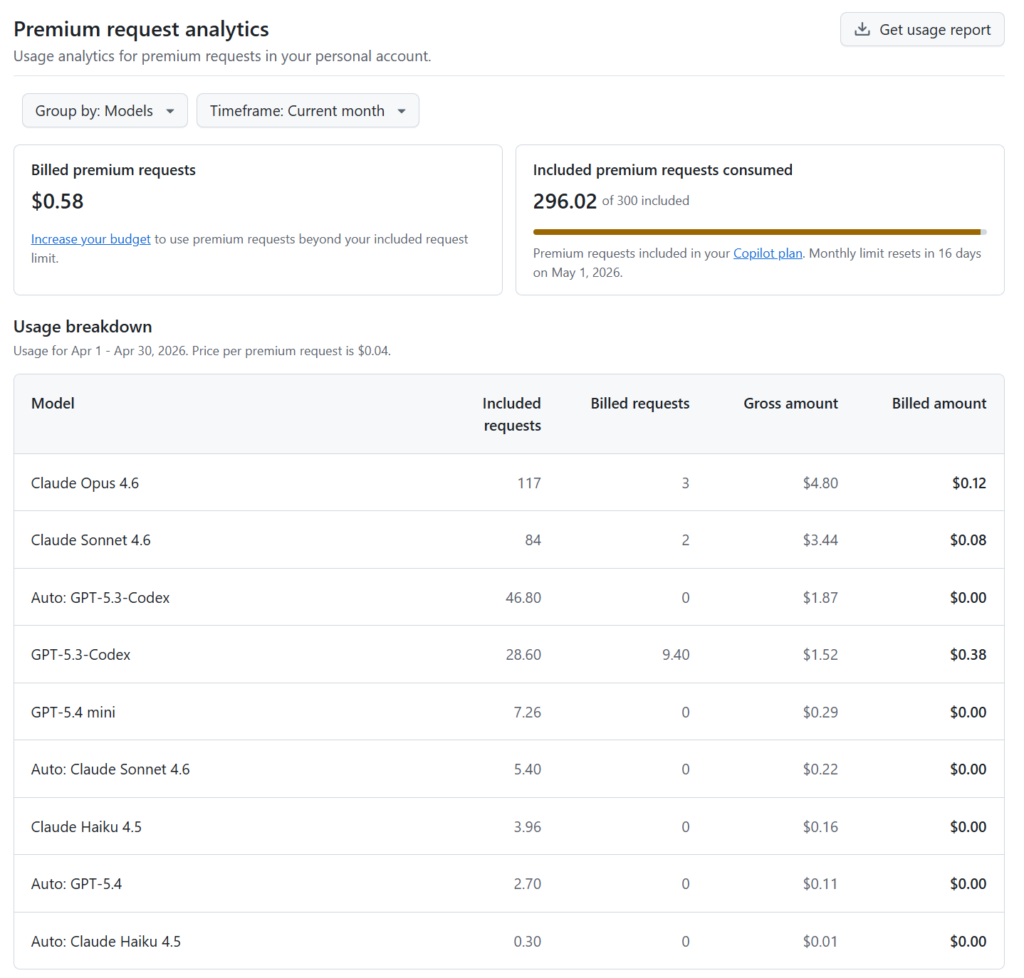

You can read more about the billing model on the docs site, which is request based rather than token based, and models are billed with multipliers, generally of 0x, 0.33x, 1x, or 3x. A Pro subscription includes 300 requests (and is only 10 USD per month, with Pro+ being 39 USD with 5x the request limit), which would be 100 Claude Opus requests, or 300 GPT-5.3-Codex requests, for example. Spread across a 30 day month, that’s roughly 3 Opus requests a day, or 10 GPT-5.3-Codex requests a day (every day). You can use the “Auto” model selection to get a 10% discount. Overage can be used to pay per request after the allowance in your plan, and the cost is 0.04 USD per request, which would be 0.12 USD per Opus request, for example (not bad when my experience of Opus in the early days was I could easily spend $5-10+ on a single prompt when using the per token API).
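As a rough sketch of that arithmetic: the constants below come from the figures above, while the function names, the 30 day month and the model-to-multiplier mapping (3x for Opus, 1x for GPT-5.3-Codex, as implied by the examples) are my own assumptions for illustration rather than anything official.

```python
# Back-of-envelope Copilot request maths, using the figures quoted above.
INCLUDED_REQUESTS = 300         # premium requests included in Pro per month
OVERAGE_USD_PER_REQUEST = 0.04  # price of one premium request beyond the allowance
AUTO_DISCOUNT = 0.10            # discount when "Auto" picks the model
DAYS_PER_MONTH = 30             # assumption, for rough per-day figures

# Illustrative multipliers only, as implied by the examples in the text.
MULTIPLIERS = {"Claude Opus": 3.0, "GPT-5.3-Codex": 1.0}


def request_units(multiplier: float, auto: bool = False) -> float:
    """Premium request units consumed by a single prompt."""
    return multiplier * (1 - AUTO_DISCOUNT) if auto else multiplier


def prompts_per_month(multiplier: float) -> float:
    """How many prompts the included allowance covers at a given multiplier."""
    if multiplier == 0:
        return float("inf")  # 0x models do not consume the allowance
    return INCLUDED_REQUESTS / multiplier


def overage_usd_per_prompt(multiplier: float) -> float:
    """Cost of one prompt once the included allowance is used up."""
    return multiplier * OVERAGE_USD_PER_REQUEST


for model, multiplier in MULTIPLIERS.items():
    monthly = prompts_per_month(multiplier)
    print(
        f"{model}: {monthly:.0f} prompts/month, "
        f"{monthly / DAYS_PER_MONTH:.1f} per day, "
        f"{overage_usd_per_prompt(multiplier):.2f} USD each once in overage"
    )
```

The multiplier is the only thing that changes between models, so the same three functions cover the 0x and 0.33x tiers too.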

As I continue to lean into the heavier models, I certainly find it easier to break out of the 300 included requests in my current plan. The screenshot below is halfway through April 2026, with around 300 used, and I would likely end up using another 300 before month end, which would be an overage cost of 12 USD (I reached 16 USD overage for the first time last month).
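That month-end guess is just a straight-line projection, which can be sketched as below. The function name and the assumption that usage stays flat for the rest of the month are mine, so treat the result as a rough estimate only.

```python
INCLUDED_REQUESTS = 300         # premium requests included in the Pro plan
OVERAGE_USD_PER_REQUEST = 0.04  # USD per premium request beyond the allowance


def projected_overage_usd(used_so_far: float, day_of_month: int, days_in_month: int) -> float:
    """Linearly extrapolate usage to month end and price the part above the allowance."""
    projected_total = used_so_far / day_of_month * days_in_month
    billable = max(0.0, projected_total - INCLUDED_REQUESTS)
    return billable * OVERAGE_USD_PER_REQUEST


# Halfway through a 30 day month with around 300 requests used.
print(f"{projected_overage_usd(300, 15, 30):.2f} USD")
```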

You can only download summary usage data per file, and the file comes by email, so I opted for monthly files giving me monthly granularity, but I guess you could download these per day if you wanted more fine grained data… But for now monthly will do…

Usage is trending up, as is the diversity of models that I end up using in any given month.

Overall I used to prefer the GPT models, but have slowly been shifting over to the Claude models more and more, and am currently split about 50/50.

I really don’t like the auto mode where the model is chosen for me. I believe each model behaves quite differently, whether in the way it iterates, how quickly requests can end, or its reliability in various situations, so for now I still appreciate that control. I know what task I can give to a “free” cost model, and what likely needs something more pricey.

And in terms of spend, I really have only just started bursting out of the Pro limits.

Monthly usage data

| month | GPT-5.3-Codex | Claude Sonnet 4.5 | Claude Haiku 4.5 | Claude Sonnet 4.6 | Claude Opus 4.5 | GPT-5.1-Codex-Mini | Claude Opus 4.6 | Gemini 3 Pro | Gemini 3 Flash | GPT-5.1-Codex-Max | GPT-5.2-Codex | GPT-5.4 mini | GPT-5.4 | Coding Agent model | Code Review model | Claude Sonnet 4 | GPT-4o mini | GPT-5.2 | Claude Sonnet 3.5 | Gemini 2.5 Pro | GPT-5 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2025-10 | 0 | 225.9 | 83.16 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 2 | 0 | 0 | 0 | 0 | 1 | 0 | 0 |
| 2025-11 | 0 | 26 | 29.37 | 0 | 61 | 0.33 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2025-12 | 0 | 36 | 40.11 | 0 | 51 | 10.23 | 0 | 93 | 0.66 | 18.7 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 |
| 2026-01 | 0 | 77.9 | 128.76 | 0 | 72 | 1.32 | 0 | 0 | 0.66 | 3.6 | 0 | 0 | 0 | 0 | 0 | 2 | 1 | 0 | 0 | 0 | 0.9 |
| 2026-02 | 182 | 0 | 2.22 | 4 | 0 | 114.51 | 0 | 0 | 3.3 | 0 | 8 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| 2026-03 | 240 | 2 | 3.75 | 259.4 | 0 | 42.57 | 132 | 0 | 24.75 | 0 | 0 | 7.92 | 2.7 | 0 | 2 | 0 | 0 | 0 | 0 | 0 | 0 |
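To turn the table into per-month totals, something like the following works. It copies only the non-zero cells from the table above, and it assumes the values are premium request units (after multipliers) billed against the 300 included requests at 0.04 USD each beyond that. The function names are just for illustration.

```python
INCLUDED_REQUESTS = 300         # Pro plan monthly allowance
OVERAGE_USD_PER_REQUEST = 0.04  # USD per premium request beyond the allowance

# Non-zero cells from the monthly usage table, keyed by month then model.
USAGE = {
    "2025-10": {"Claude Sonnet 4.5": 225.9, "Claude Haiku 4.5": 83.16,
                "Coding Agent model": 2, "Claude Sonnet 3.5": 1},
    "2025-11": {"Claude Sonnet 4.5": 26, "Claude Haiku 4.5": 29.37,
                "Claude Opus 4.5": 61, "GPT-5.1-Codex-Mini": 0.33, "Gemini 3 Pro": 2},
    "2025-12": {"Claude Sonnet 4.5": 36, "Claude Haiku 4.5": 40.11,
                "Claude Opus 4.5": 51, "GPT-5.1-Codex-Mini": 10.23,
                "Gemini 3 Pro": 93, "Gemini 3 Flash": 0.66,
                "GPT-5.1-Codex-Max": 18.7, "GPT-5.2": 1, "Gemini 2.5 Pro": 1},
    "2026-01": {"Claude Sonnet 4.5": 77.9, "Claude Haiku 4.5": 128.76,
                "Claude Opus 4.5": 72, "GPT-5.1-Codex-Mini": 1.32,
                "Gemini 3 Flash": 0.66, "GPT-5.1-Codex-Max": 3.6,
                "Claude Sonnet 4": 2, "GPT-4o mini": 1, "GPT-5": 0.9},
    "2026-02": {"GPT-5.3-Codex": 182, "Claude Haiku 4.5": 2.22,
                "Claude Sonnet 4.6": 4, "GPT-5.1-Codex-Mini": 114.51,
                "Gemini 3 Flash": 3.3, "GPT-5.2-Codex": 8},
    "2026-03": {"GPT-5.3-Codex": 240, "Claude Sonnet 4.5": 2,
                "Claude Haiku 4.5": 3.75, "Claude Sonnet 4.6": 259.4,
                "GPT-5.1-Codex-Mini": 42.57, "Claude Opus 4.6": 132,
                "Gemini 3 Flash": 24.75, "GPT-5.4 mini": 7.92,
                "GPT-5.4": 2.7, "Code Review model": 2},
}


def monthly_total(month: str) -> float:
    """Total request units used in a month, across all models."""
    return sum(USAGE[month].values())


def models_used(month: str) -> int:
    """Number of distinct models with any usage in a month."""
    return len(USAGE[month])


def overage_usd(month: str) -> float:
    """Estimated overage cost for a month, under the assumptions above."""
    return max(0.0, monthly_total(month) - INCLUDED_REQUESTS) * OVERAGE_USD_PER_REQUEST


for month in sorted(USAGE):
    print(
        f"{month}: {monthly_total(month):7.2f} requests across "
        f"{models_used(month):2d} models, est. overage {overage_usd(month):5.2f} USD"
    )
```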

So, my spend, despite using AI assisted development now since 2021, is still only in the double digits in the past 5 years (well, it will be 5 years in a few months time), though I have a feeling we might reach triple digits by the end of the year.

I’d love to try out Claude Code more, but the cost benefit analysis doesn’t seem that different to me. Perhaps I should splash out for a Pro subscription one month for a real comparison, but all I see online is folks hitting into and getting frustrated by the Claude rate limits and session limits? I have only managed to reach these once with GitHub Copilot so far, but perhaps everyone else is sending these agents into far deeper and longer loops than I am, or has the mental capacity to juggle even more flows and projects than me.

What’s next for GitHub Copilot

I actually hit into my first ever GitHub Copilot session rate limit last week on my Pro plan, despite in my opinion being a fairly heavy user. And the past couple of weeks have brought some interesting news about the GitHub Copilot rate limits…

On 10th April they said they were “Enforcing new limits”, with some reports of a bug that led to undercounted token usage, the fix for which now leads to users hitting their subscription allowances faster. On the same date they also paused “new GitHub Copilot Pro trials” due to abuse. And on 20th April there was another post talking about individual plan usage limit tightening, which helpfully includes some details about how the limits work.

GitHub Copilot has two usage limits today: session and weekly (7 day) limits. Both limits depend on two distinct factors—token consumption and the model’s multiplier.

The session limits exist primarily to ensure that the service is not overloaded during periods of peak usage. They’re set so most users shouldn’t be impacted. Over time, these limits will be adjusted to balance reliability and demand. If you do encounter a session limit, you must wait until the usage window resets to resume using Copilot.

Weekly limits represent a cap on the total number of tokens a user can consume during the week. We introduced weekly limits recently to control for parallelized, long-trajectory requests that often run for extended periods of time and result in prohibitively high costs.

The weekly limits for each plan are also set so that most users will not be impacted. If you hit a weekly limit and have premium requests remaining, you can continue to use Copilot with Auto model selection. Model choice will be reenabled when the weekly period resets. If you are a Pro user, you can upgrade to Pro+ to increase your weekly limits. Pro+ includes over 5X the limits of Pro.

Usage limits are separate from your premium request entitlements. Premium requests determine which models you can access and how many requests you can make. Usage limits, by contrast, are token-based guardrails that cap how many tokens you can consume within a given time window. You can have premium requests remaining and still hit a usage limit.

How usage limits work in GitHub Copilot @binderjoe github.blog – April 20, 2026

I wonder if I will feel the effects of these changes in the coming weeks or months, and I wonder if that will lead to a plan change or trying out Codex or Claude some more.

27 April Edit

Within a week of initially writing this blog post, it’s all change: after the initial reports, it’s now official, and things will be changing on June 1.

What’s changing on June 1 (for business and enterprise anyway)

- Premium request units, or PRUs, will be replaced by GitHub AI Credits which are a monthly allotment of credits consumed based on token consumption (input, output, and cached tokens) according to the listed API rates per model. This change aligns Copilot pricing with actual usage and is an important step toward a sustainable, reliable Copilot business and experience for all users.

- Copilot code review’s agentic architecture runs on GitHub Actions. Copilot code review will now consume GitHub Actions minutes, in addition to GitHub AI Credits. These minutes are billed at the same per-minute rates as other GitHub Actions workflows.
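To get a feel for what token based billing means per request, here is a minimal sketch of the calculation as I understand it from the announcement. The rates below are made-up placeholders, not real GitHub or model prices, and the class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRates:
    """USD per million tokens for one model (placeholder values only)."""
    input_per_mtok: float
    output_per_mtok: float
    cached_per_mtok: float


# Hypothetical rates purely for illustration; look up the listed API rates per model.
PLACEHOLDER_RATES = TokenRates(input_per_mtok=1.0, output_per_mtok=10.0, cached_per_mtok=0.1)


def request_cost_usd(input_tokens: int, output_tokens: int, cached_tokens: int,
                     rates: TokenRates) -> float:
    """Cost of one request as the sum of input, output and cached token charges."""
    return (
        input_tokens * rates.input_per_mtok
        + output_tokens * rates.output_per_mtok
        + cached_tokens * rates.cached_per_mtok
    ) / 1_000_000


# One agent turn with a large cached context and a modest amount of output.
print(f"{request_cost_usd(200_000, 50_000, 1_000_000, PLACEHOLDER_RATES):.2f} USD")
```

The main difference from the request based model is that a long agent session no longer costs the same as a one-line question, since cost scales with the tokens actually consumed.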

I’m yet to get an email to my personal account, but I can’t imagine it will stray too much from the above? Or perhaps they will keep it as is, but heavily enforce the session rate limits (which they already appear to be doing).

It does feel like the IDE integration of AI is starting to become more of a gateway to the cloud integrations now rather than the core use case. I’m using Jules, Copilot cloud, and now devin.ai regularly, and plan to expand the scope of my cloud agent usage for development in the coming months (given the GitHub changes), if for no other reason than to make sure I’m aware of what else is out there, and how it feels.
