2019 Update: This script now exists with an easy to use GUI

Back in 2016 I wrote a short hacky script for taking the HTML from Facebook data downloads and adding any data possible back to the image files that also came with the download. I created this as I wanted to grab all of my photos from Facebook, upload them to Google Photos, and have Google automatically slot them into the correct place in the timeline. Recent news articles about Cambridge Analytica and the harvesting of Facebook data have led to many people deciding to leave the platform, so I decided to check back on my previous script, see if it still worked, and make it a little easier to use.

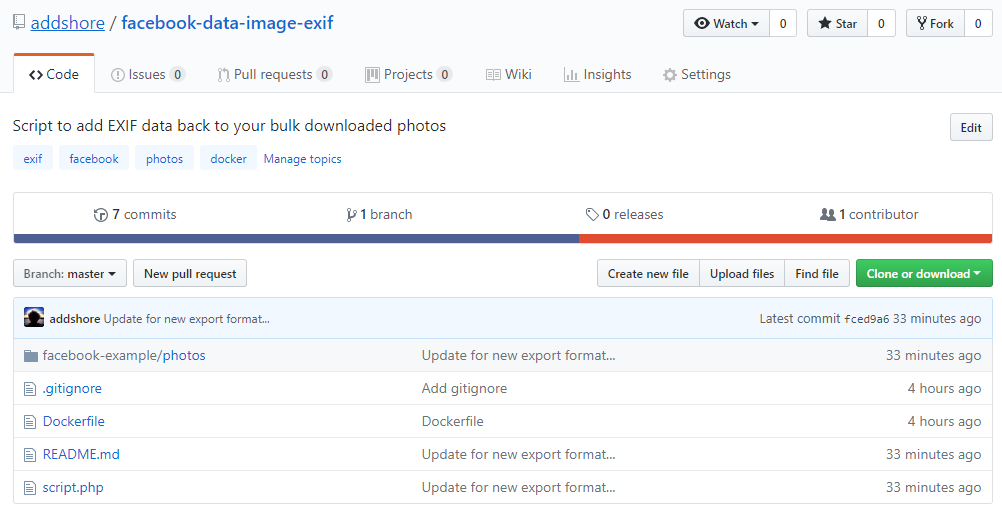

Step #1 – Move it to GitHub

Originally I hadn’t really planned on anyone else using the script; in fact, I still don’t really plan on it. But let’s keep code on GitHub, not in aging blog posts.

https://github.com/addshore/facebook-data-image-exif

Step #2 – Docker

The previous version of the script had hard-coded paths, so a user had to modify the script and also download things such as ExifTool before it would work.

The GitHub repo now contains a Dockerfile that includes the script and all necessary dependencies.

If you have Docker installed, running the script is now as simple as `docker run --rm -it -v //path/to/facebook/export/photos/directory://input facebook-data-image-exif`.

Step #3 – Update the script for the new format

As far as I know, the format of the Facebook data dump downloads is not documented anywhere. The format totally sucks; it would be quite nice to have some JSON included, or anything slightly more structured than HTML.

The new format moved the location of the HTML files for each photo album, but luckily the format of the HTML remained mostly the same (or at least the crappy parsing I created still worked).
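As a rough illustration of the idea (read a date out of the album HTML, then hand it to ExifTool), here is a minimal Python sketch. It is not the code from the repo. The HTML layout below is entirely made up, since the real export format is undocumented, and the names `AlbumParser` and `exiftool_command` are hypothetical. The only real pieces are ExifTool's `-DateTimeOriginal=` tag assignment and its `-overwrite_original` option.

```python
from datetime import datetime
from html.parser import HTMLParser


class AlbumParser(HTMLParser):
    """Collect (image src, date text) pairs from a made-up album layout.

    Assumed (hypothetical) markup: each photo is an <img src="..."> followed
    by a <div class="meta"> holding a date such as "2016-05-01 14:30:00".
    """

    def __init__(self):
        super().__init__()
        self.photos = []          # list of (src, date text) tuples
        self._current_src = None  # src of the most recent <img>
        self._in_meta = False     # True while inside <div class="meta">

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img":
            self._current_src = attrs.get("src")
        elif tag == "div" and attrs.get("class") == "meta":
            self._in_meta = True

    def handle_endtag(self, tag):
        if tag == "div":
            self._in_meta = False

    def handle_data(self, data):
        text = data.strip()
        if self._in_meta and self._current_src and text:
            self.photos.append((self._current_src, text))
            self._current_src = None


def exiftool_command(image_path, date_text):
    """Build an ExifTool argument list that sets the photo's taken date."""
    taken = datetime.strptime(date_text, "%Y-%m-%d %H:%M:%S")
    stamp = taken.strftime("%Y:%m:%d %H:%M:%S")  # EXIF uses colons in the date
    return ["exiftool", "-overwrite_original",
            f"-DateTimeOriginal={stamp}", image_path]


# Invented sample input, only here to exercise the parser.
SAMPLE_HTML = """
<div class="block"><img src="photos/album/1.jpg" />
<div class="meta">2016-05-01 14:30:00</div></div>
"""

parser = AlbumParser()
parser.feed(SAMPLE_HTML)
commands = [exiftool_command(src, date) for src, date in parser.photos]
print(commands)
```

Each resulting list could be passed to `subprocess.run` once ExifTool is installed. Building the command separately from running it makes it easy to check what would be written before touching any files.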

The new data download did, however, do something odd with the image sources. Instead of loading them from the local directory (all of the data you have just downloaded), the srcs still point to the Facebook CDN. Not sure if this was intentional, but it’s rather crappy. I imagine that if you delete your whole Facebook account, these static HTML files will actually stop working. Sounds like someone needs to write a little script for this…
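Such a script could be quite small. Here is a rough Python sketch, assuming that each downloaded image keeps the same file name that appears in its CDN URL (I have not verified this against a real export). The function name `localise_srcs` and the host `cdn.example.com` are made up for the example.

```python
import re
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Matches src="http(s)://..." attributes. A regex is crude for HTML,
# but good enough for a sketch.
REMOTE_SRC = re.compile(r'src="(https?://[^"]+)"')


def localise_srcs(html, local_dir):
    """Point remote image srcs at files of the same name in local_dir.

    Assumption: the downloaded file keeps the file name used in the CDN URL.
    """

    def replace(match):
        # Drop the host and query string, keep only the final path segment.
        file_name = PurePosixPath(urlparse(match.group(1)).path).name
        return f'src="{local_dir}/{file_name}"'

    return REMOTE_SRC.sub(replace, html)


page = '<img src="https://cdn.example.com/v/t1/12345_n.jpg?oh=abc" />'
print(localise_srcs(page, "photos/album"))
```

Srcs that are already relative are left untouched, so running it twice over the same file should be harmless.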

Step #4 – Profit!

Well, no profit, but hopefully some people can make use of this again, especially those currently fleeing facebook.

You can find the link to “download a copy” of your data at the bottom of your Facebook settings.


I wonder if there are any public figures for the rate of facebook account deactivations and deletions…

Hi, this is great but I have absolutely no programming experience – is there either an easier way or a step by step guide that you could make please? ‘Run the script with the correct things passed’ makes no sense to me and I’ve never used Docker! Thanks!

I did want to try and make an even easier to use version.

What OS are you running?

Perhaps I could rewrite it in something a little better…

Either Mac or Windows 7 would be fantastic. I used a website to download all of my FB photos but there are no dates on any of them and even when I found a website that supposedly did download the EXIF data too it didn’t work and 95% of my ‘tagged in’ photos weren’t visible! Thanks

Take a look at https://addshore.com/2019/02/add-exif-data-back-to-facebook-images-0-1/ :)

I really want to download all my Facebook images with EXIF data, but I have no programming experience.

Have you been able to make a more user-friendly way to do this, than github?

After continued and increased interest I started writing a better version yesterday.

Shouldn’t be long now!

From my basic testing the small Java version works.

You can find a link to the download @ https://github.com/addshore/facebook-data-image-exif/pull/2#issuecomment-459349985

Sorry, there are no docs yet, but once you run the jar you need to point one text box at the photos_and_videos directory of your extracted dump and the other at a copy of ExifTool (https://www.sno.phy.queensu.ca/~phil/exiftool/).

I’ll work on a proper write up and docs in the next 24 hours.

Just curious if you’ve had a chance to put together a write-up for this yet. I tried installing docker but am having trouble running the script. My programming experience is pretty limited so I’m sure I’m making a pretty simple error…

No write-up yet, but the comment above includes most of the details you need.

I’m going to have another look at finishing this off this weekend. (Gotta fix the UI freezing thing)…

Hey thanks for the help! I was able to get a lot further but looks like I’m still getting stuck somewhere. When I run the Java tool, the UI tells me it found my albums but then it appears to just stop. I think I may not be pointing the 2nd text box to the exiftool correctly… I unzipped the tool (twice, first to a .tar and then to the component files) and tried pointing directly to the file itself as well as just its directory but it doesn’t appear to ever move past finding the albums. In other words, the java UI just says:

“Processing… Looking for Albums… 17 albums found!”

After that, it doesn’t appear to do anything. It doesn’t really appear to be a frozen UI so much as just it stopped after finding the files (although I could definitely be mistaken).

To be clear, for the second box I’m pointing to a directory like this:

C:\Users...\Image-ExifTool-11.26.tar\Image-ExifTool-11.26\Image-ExifTool-11.26

I’ve also tried

C:\Users...\Image-ExifTool-11.26.tar\Image-ExifTool-11.26\Image-ExifTool-11.26\exiftool

Any thoughts on what I’m missing? Thanks again!

I did a tiny bit more work on this in the past week.

I have just uploaded a slightly newer build to https://github.com/addshore/facebook-data-image-exif/releases/tag/0.1-rc3-(20190201-181100)

This still has the UI lockup issue (as I just haven’t written it right)

Read the notes on that link, even though it is crappy, it should work for you, but not many people have tested it yet.

If the UI locks up and it isn’t writing to any files, then you’ll just have to keep an eye out for when I fix it!

EDIT: so the freezing issue actually meant nothing worked. I should have a new working / final version in the next 2 days with documentation and everything…

Take a look at https://addshore.com/2019/02/add-exif-data-back-to-facebook-images-0-1/ :)
